We are flabbergasted! Interest in LHC@home 2.0 has exceeded our wildest dreams, following the huge press coverage that a brief mention in a CERN press release got us. Thank you, everyone! Yesterday we reached nearly 8,000 registered volunteers, which pushed the number of computers simultaneously connected to our server to well over 1,000. To give you an idea, with just 100 simultaneous connections we had already reached the equivalent of all the computing power at CERN that Test4Theory project physicists have access to. So getting more than 10x that in just a few days boggled our minds - and also bogged down our servers! Since yesterday, we have had to put further eager participants on hold while we sort out how to handle this huge amount of support. You can read a more detailed technical summary of the problems we encountered - and how we are fixing them - here.

We're going to open gradually to more participants in the near future. We'll then stabilize for a while before further increases. This was announced as a beta test to explore the limitations of our system, and we certainly succeeded in doing that, thanks to your support. Particular thanks to all our experienced BOINC users in the forums who have been patiently explaining to newcomers that this sort of thing is normal in a beta test. And hats off to our technical crew (which is basically just Artem and Anton in Geneva and Daniel in Madrid) who have been working literally around the clock to get the system running smoothly again.

In the meantime, if you are new to the field of volunteer computing, we warmly encourage you to browse here some of the many other exciting science projects you can contribute to, using the same BOINC platform that LHC@home is running on. And if you'd like to be kept up to date on LHC@home 2.0 progress, so you can be first in line when we are ready to accept more volunteers, just subscribe to the RSS feed for this News list.

The LHC@home 2.0 Team

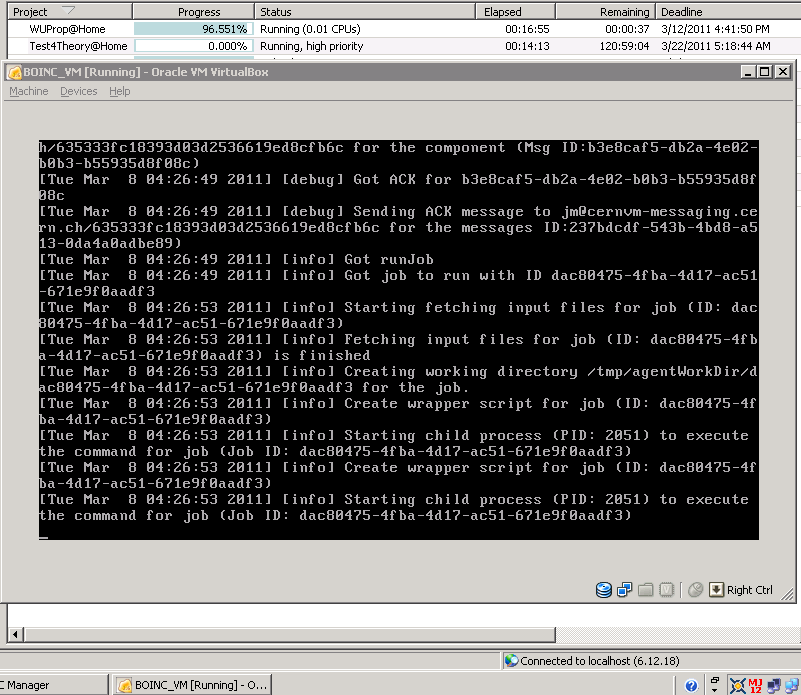

Dear All,

Following unexpected posts on major news sites on the 11th of August, we started seeing exponential growth in the number of connected virtual machines. Every minute, several new virtual machines popped up. Just to give you a rough idea: before, when we were only on major tech blogs and news sites, the curve showing the number of active virtual machines looked like this; after BBC and MSNBC wrote about LHC@home, the curve in no time became like this.

Some of the connected virtual machines apparently had problems with the application software (the causes are still under investigation), so they were getting jobs from our queues, failing to run them, reporting them back and asking for more jobs. CernVM Co-Pilot (the framework that we use to distribute jobs from CERN to virtual machines and gather the results back) normally takes a couple of seconds to generate a job request in a virtual machine, send it over to the server, pick the waiting job from the queue, prepare it, send it for execution, and start running it in the virtual machine. So, as you can imagine, with about 1000 active virtual machines, several of which were 'rogue' and were basically doing nothing but draining the job server, our queues became empty very fast. We quickly ended up with queues that were draining faster than our scripts could possibly feed them.

Normally, this would not have been a problem at all: virtual machines were not supposed to jump on the servers all at once when the queues were empty. There is a built-in mechanism that makes them back off exponentially, precisely to avoid overloading our servers. Everything would have been fine if we had not had a bug that prevented our exponential back-off algorithm from working, and instead turned all our virtual machines into cannons firing hundreds of requests per second at our server.
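For readers unfamiliar with the idea, exponential back-off can be sketched roughly as follows. This is only an illustrative Python sketch, not the actual CernVM Co-Pilot agent code: the function name `backoff_delays` and all parameter values (2-second starting delay, doubling factor, 10-minute cap, optional jitter) are assumptions made up for the example.

```python
import random


def backoff_delays(base=2.0, factor=2.0, cap=600.0, attempts=8,
                   jitter=0.0, rng=None):
    """Yield the wait in seconds before each retry after an empty-queue reply.

    All defaults are hypothetical values chosen for illustration only.
    """
    rng = rng or random.Random()
    delay = base
    for _ in range(attempts):
        # Optional jitter (0.0 to 1.0) randomizes each wait by +/- that
        # fraction, so many clients do not retry at the same instant.
        spread = delay * jitter
        yield min(cap, delay + rng.uniform(-spread, spread))
        # Grow the nominal delay geometrically, but never beyond the cap.
        delay = min(cap, delay * factor)


if __name__ == "__main__":
    for attempt, wait in enumerate(backoff_delays(cap=60.0, attempts=6), 1):
        print(f"retry {attempt}: wait {wait:.0f} s")
```

With waits that keep doubling, a client facing an empty queue contacts the server less and less often. If the delay never grows, as happens when a bug defeats the mechanism, every client keeps retrying at full speed.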
Because of this flood of requests, we had to take our servers down and empty the BOINC server queue (which has nothing to do with the Co-Pilot queue). This meant that BOINC clients would not start new virtual machines anymore. It took a while to figure the problem out. The updated code was pushed into the CernVM File System repository at about 2 AM on August 12th (GMT+2), and at about 10 AM on August 12th the server was configured to prevent agents with the buggy code from connecting (because those agents still carry the bug and would still be flooding us). We put an announcement on the forums asking users to reboot (to make sure they pick up the updated code). After that the system started working again.

Currently there are about 300 concurrently active machines. These belong to the users who got virtual machines before we emptied the BOINC server queue and who rebooted them after our announcement. We are planning to slowly start adding new Work Units to the BOINC server queue (100 at a time), which means that virtual machines should start to pop up on registered users' machines soon.

Our initial goal was to recruit about 2,000 volunteers (remember, we just wanted to do a beta test) so that several hundred of them would be active at all times. We carried out alpha tests with about 300 registered volunteers (which peaked at up to 100 online volunteers). As you will soon read in the other, more general announcement that we are about to publish on our main page, we are already very close to having 8,000 registered volunteers. We will try to slowly ramp that number up to 10,000, after which we plan to stabilize for a while before further increases.

We would like to ask everyone to remember that we are still in the beta testing phase, which means that outages like this are likely to happen again; in fact, we do expect them to happen again. To discover and eliminate bugs, we are, together with you, intentionally pushing the system well beyond its limits.
Last but not least, we would like to thank all of you again for your enthusiasm, help, patience and understanding!

Artem, on behalf of the LHC@home 2.0 Team